Introduction

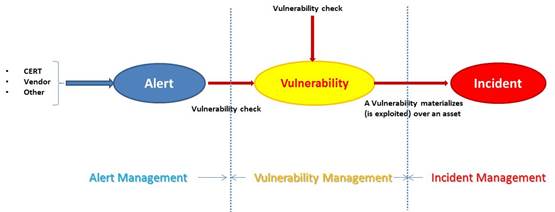

Talking about alerts, vulnerabilities and incidents raises many language challenges. A common error is to speak about vulnerabilities when you are really referring to “vulnerability alerts”. Another common confusion is to talk about “incidents” when a vulnerability is found in a system. A vulnerability can cause an incident when exploited, but it is not an incident in itself.

Conversely, people talk about vulnerabilities when an incident has actually happened. Let’s look at the different stages of managing “vulnerability alerts”, vulnerabilities and incidents, and at how to manage them in a coordinated way.

Definitions

Alerts

Vulnerability alerts, more commonly just “alerts”, are warnings from security intelligence sources that one or more of your IT assets could be exploited through a weakness in the technology platform.
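As a rough illustration of what "one or more of your IT assets" means in practice, the sketch below matches an alert against an asset inventory. The `Alert` and `Asset` classes, the hostnames and the alert identifier are all hypothetical and exist only for this example; a real inventory would also need version matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    """A vulnerability alert as published by a CERT or a vendor."""
    alert_id: str  # hypothetical identifier, not a real advisory
    product: str   # product named in the alert
    summary: str


@dataclass(frozen=True)
class Asset:
    """An entry in a hypothetical IT asset inventory."""
    hostname: str
    products: frozenset  # names of the products installed on this asset


def affected_assets(alert, inventory):
    """Return the hostnames of assets running the product named in the alert."""
    wanted = alert.product.lower()
    return sorted(
        asset.hostname
        for asset in inventory
        if wanted in {p.lower() for p in asset.products}
    )


inventory = [
    Asset("db01", frozenset({"Solaris", "Oracle"})),
    Asset("web01", frozenset({"AIX", "Apache"})),
    Asset("files01", frozenset({"HP-UX", "SAMBA"})),
]
alert = Alert("EXAMPLE-0001", "apache", "Illustrative alert, not a real advisory")
print(affected_assets(alert, inventory))  # ['web01']
```

An alert that matches no asset in the inventory can be closed without starting the vulnerability management process.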

Alert Sources

For a person new to the security side of system administration, the hardest thing is to determine where to get security information such as vulnerability alerts. Many websites offer “expert information”, but which ones do you trust?

The website generally accepted as a good starting point is that of the CERT (Computer Emergency Response Team) Coordination Center (CERT/CC).

The purpose of the CERT/CC is: “Study Internet security vulnerabilities, handle computer security incidents, publish security alerts, research long-term changes in networked systems, and develop information and training to help you improve security at your site.”

The CERT/CC site is considered an authoritative source of information by security and system administration staff.

There are multiple CERT organizations, one or more per country or region:

- CERT-EU for EU (also part of ENISA)

The drawback of relying on these sites is that you do not get detailed vendor-specific information. For this, you need to go to the vendor websites, and this is where it starts to get more complex. For a UNIX team, this may mean another four or more sites to monitor; Table 1 below shows a sample:

| Vendor | Operating System | WWW Site |
| --- | --- | --- |
| Sun | Solaris | |
| HP | HP-UX | |
| IBM | AIX | |

In many cases, the vendors will not advise of a vulnerability unless it has been exploited or is likely to be exploited, because it is not in their best interests to do so. None of the vendors listed above has a direct link from its home page to information about security-related problems with its software.

Now add the products that run on these servers, such as databases (e.g. Oracle), web servers and firewalls, and the problem gets larger. Then there are the open source products such as SAMBA, OpenSSH or Apache.

Why do you need to worry about them? The answer is simple: the security of the server is only as good as its weakest link. Privileged access can be gained via the operating system or via an application, and the attacker will exploit the weakest link.

The usual process is:

- Somebody (usually a “white hat”) discovers a potential vulnerability and, to be taken seriously, provides a proof of concept (PoC) of an exploit.

- The vendor analyzes the vulnerability and, in most cases, acknowledges it by issuing an alert bulletin.

- The vendor publishes the mitigation (aka patch) for the system according to its support policy, or out of cycle if the potential vulnerability is critical.

Vulnerabilities

Vulnerability commonly refers to “the inability to withstand the effects of a hostile environment”.

For the context used in the software security industry, a vulnerability is a security exposure that results from a product weakness that the product developer did not intend to introduce and should fix once it is discovered.

From the practical perspective, a vulnerability is the intersection of three elements: a system susceptibility or flaw, attacker access to the flaw, and attacker capability to exploit the flaw. To exploit a vulnerability, an attacker must have at least one applicable tool or technique that can connect to a system weakness (aka an “exploit”). The set of exploitable weaknesses of a system is also known as its “attack surface”.
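The "intersection of three elements" can be read as a logical AND. The following is only a minimal sketch of that reading; the `Exposure` class and its field names are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    """The three elements named in the practical definition above."""
    has_flaw: bool              # the system has a susceptibility or flaw
    attacker_has_access: bool   # the attacker can reach the flaw
    attacker_can_exploit: bool  # the attacker has a working tool or technique

    def is_vulnerability(self) -> bool:
        # All three elements must be present at the same time.
        return (
            self.has_flaw
            and self.attacker_has_access
            and self.attacker_can_exploit
        )


# A flawed service that the attacker cannot reach (for example, on an
# isolated network) does not meet the practical definition.
isolated = Exposure(has_flaw=True, attacker_has_access=False, attacker_can_exploit=True)
exposed = Exposure(has_flaw=True, attacker_has_access=True, attacker_can_exploit=True)
print(isolated.is_vulnerability(), exposed.is_vulnerability())  # False True
```

This is why mitigation does not always mean patching: removing attacker access (for example with a firewall rule) also breaks the intersection.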

Security Incidents

From ISO/IEC 27001:2005: “An information security incident is made up of one or more unwanted or unexpected information security events that could very likely compromise the security of information and weaken or impair business operations”.

“An information security event indicates that the security of an information system, service, or network may have been breached or compromised. An information security event indicates that an information security policy may have been violated or a safeguard may have failed”.

So one cause (but not the only one) of a security incident is a vulnerability that materializes on an asset and creates a security event.

The vulnerability lifecycle

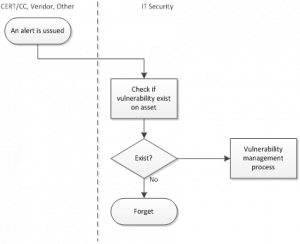

The process starts when some entity provides an alert related to a type of asset or a group of assets.

At this point the alert management process ends and the vulnerability management process starts:

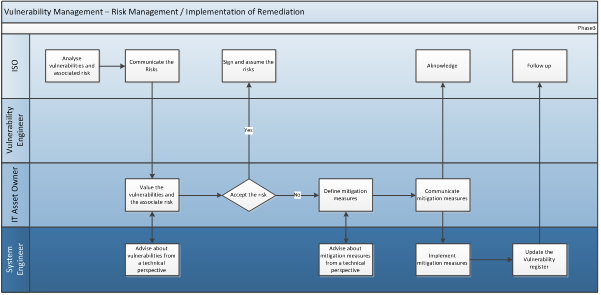

- Analyze vulnerabilities and associated risks. The ISM will assess the vulnerabilities found and categorize the associated risks according to the CVSS score provided by the vulnerability scanner.

- Communicate the risk. The ISM will send the IT Asset Owner a report of the vulnerabilities found, with their associated CVSS scores and a link to the Vulnerability Register (Appendix 1).

- Value the vulnerabilities and the associated risk. The IT Asset Owner, having final responsibility for the system, will evaluate the risk using the referenced risk assessment methodology and determine whether to assume the risk, mitigate it (applying the proper mitigation measures) or transfer it.

  - Assuming the risk means the IT Asset Owner will be fully responsible and accountable if an incident happens and impacts the asset or other related assets because the risk associated with the vulnerability was not mitigated.

  - The risk associated with the highest level of vulnerabilities cannot be assumed; it must always be mitigated within a given timeframe.

  - In the case of risk acceptance, the IT Asset Owner will sign the Vulnerability Risk Acceptance form (Appendix 4) and add a note to the Vulnerability Register (Appendix 3), formally accepting ownership of the risk.

- Define the mitigation measures. The IT Asset Owner, in agreement with the ISM, will define the mitigation measures required to reduce the risk.

  - The mitigation measures will be implemented within the timeframe defined in the Remediation Priority Table (Appendix 2).

- Implement mitigation measures. The System Engineer (or more than one) will implement the mitigation measures defined by the IT Asset Owner within the given timeframe, unless the risk has been assumed or transferred. Critical vulnerabilities are always mitigated.

  - Once the mitigation measures have been taken, the System Engineer will update the Vulnerability Register (Appendix 3), explaining the measures taken.
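The categorization and risk-decision steps above could be sketched as follows. The severity bands are the qualitative ratings of the CVSS v3.x specification. Reading "highest level" as the "Critical" band, and allowing only mitigation for it, is an assumption of this example, as are the names `Treatment`, `cvss_severity` and `validate_treatment`. The actual timeframes live in the Remediation Priority Table (Appendix 2) and are not reproduced here.

```python
from enum import Enum


class Treatment(Enum):
    """The three options open to the IT Asset Owner."""
    ASSUME = "assume"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def validate_treatment(score: float, treatment: Treatment) -> Treatment:
    """Check the owner's decision against the rule for the highest level.

    Assumption: "highest level" means CVSS "Critical", and such risks
    must be mitigated (neither assumed nor transferred).
    """
    if cvss_severity(score) == "Critical" and treatment is not Treatment.MITIGATE:
        raise ValueError("the risk of a critical vulnerability must be mitigated")
    return treatment


print(cvss_severity(7.5))                                # High
print(validate_treatment(5.3, Treatment.ASSUME).value)   # assume
```

A check like this fits naturally at the point where the IT Asset Owner records the decision in the Vulnerability Register, so that an invalid acceptance is rejected before the acceptance form is signed.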

This vulnerability management process can also be triggered as the result of a (periodic) vulnerability scan.

One important element of this management process is the RACI Matrix:

| Phase | Activity | ISO | Vulnerability Engineer | IT Asset Owner | System Engineer |
| --- | --- | --- | --- | --- | --- |
| PREPARATION | Define the Scope | R | | | |
| | Communicate and propose a timeframe | R | | | |
| | Discuss/Agree the planned scan | R | | C | |
| | Communicate to the affected parties | R | | I | I |
| VULNERABILITY SCAN | Start the Scan | A | R | | |
| | Monitoring Stability of the System being Scanned | A | I | | R |
| | Reconfigure the Scan | A | R | | |
| | Receive and pre-process the Scan results | A | R | | |
| | Communicate the Results | I | R | I | |
| RISK REMEDIATION | Analyze vulnerabilities and associated risks | R | | | |
| | Communicate the Risk | R | | | |
| | Value the Vulnerabilities and the associated risk | | | R | C |
| | Defining the Mitigation Measures | | | R | C |
| | Communicate the Mitigation Measures | I | | R | I |
| | Implement mitigation Measures | I | | I | R |

- R: Responsible. Those who do the work to complete the activity.

- A: Accountable. The one ultimately answerable for the activity.

- C: Consulted. Those whose opinions are sought.

- I: Informed. Those who are kept up to date on progress.
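A RACI matrix is easy to keep as data and check automatically. The sketch below transcribes the matrix above into a dictionary and verifies that each activity has exactly one Responsible role. The "exactly one" rule is a common convention assumed for this example, not a requirement stated in this document, and the function names are illustrative.

```python
ROLES = ("ISO", "Vulnerability Engineer", "IT Asset Owner", "System Engineer")

# Activity -> one RACI letter per role, in ROLES order ("" means not involved).
RACI = {
    "Define the Scope": ("R", "", "", ""),
    "Communicate and propose a timeframe": ("R", "", "", ""),
    "Discuss/Agree the planned scan": ("R", "", "C", ""),
    "Communicate to the affected parties": ("R", "", "I", "I"),
    "Start the Scan": ("A", "R", "", ""),
    "Monitoring Stability of the System being Scanned": ("A", "I", "", "R"),
    "Reconfigure the Scan": ("A", "R", "", ""),
    "Receive and pre-process the Scan results": ("A", "R", "", ""),
    "Communicate the Results": ("I", "R", "I", ""),
    "Analyze vulnerabilities and associated risks": ("R", "", "", ""),
    "Communicate the Risk": ("R", "", "", ""),
    "Value the Vulnerabilities and the associated risk": ("", "", "R", "C"),
    "Defining the Mitigation Measures": ("", "", "R", "C"),
    "Communicate the Mitigation Measures": ("I", "", "R", "I"),
    "Implement mitigation Measures": ("I", "", "I", "R"),
}


def roles_with(letter, activity):
    """Return the roles holding the given RACI letter for an activity."""
    return [role for role, held in zip(ROLES, RACI[activity]) if held == letter]


def activities_without_single_responsible():
    """List activities that do not have exactly one Responsible role."""
    return [a for a in RACI if len(roles_with("R", a)) != 1]


print(roles_with("R", "Implement mitigation Measures"))  # ['System Engineer']
print(activities_without_single_responsible())           # []
```

Running the check after every change to the matrix catches rows that accidentally lose their Responsible role or gain a second one.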

Incident management is a separate process that starts when a vulnerability materializes on an asset. That means:

- An attacker successfully exploits a vulnerability, causing an impact on the asset

- A normal operation triggers a vulnerability, causing an impact on the asset

In either case, a specific procedure is needed. A good starting point is ISO/IEC 27035:2011, which expands the original security incident section of ISO/IEC 27002.

This standard has three parts:

- ISO/IEC 27035-1: principles of incident management

This part outlines the concepts underpinning information security incident management and introduces the remaining two parts.

In this first part, the incident management process is currently described in five phases:

- Plan and prepare: establish an information security incident management policy, form an Incident Response Team etc.

- Detection and reporting: someone has to spot and report “events” that might be or turn into incidents;

- Assessment and decision: someone must assess the situation to determine whether it is in fact an incident;

- Responses: contain, eradicate, recover from and forensically analyze the incident, where appropriate;

- Lessons learnt: make systematic improvements to the organization’s management of information security risks as a consequence of incidents experienced.

- ISO/IEC 27035-2: guidelines to plan and prepare for incident response (draft)

Part 2 concerns assurance that the organization is in fact ready to respond appropriately to information security incidents that may yet occur. It addresses the rhetorical question “Are we ready to respond to an incident?”

The content includes:

- Establishing information security incident management policy

- Updating of information security and risk management policies

- Creating information security incident management plan

- Establishing an Incident Response Team (IRT) [aka CERT or CSIRT]

- Defining technical and other support

- Creating information security incident awareness and training

- Testing the information security incident management plan

- Lessons learnt

- ISO/IEC 27035-3: guidelines for incident response operations (draft)

Part 3 offers guidance on managing and responding efficiently to information security incidents, using typical incident types to illustrate the approach. It also covers the establishment, organization and operation of the Incident Response Teams (IRTs).

Content: there are three main clauses covering

- IRTs (types, roles, structures, staffing);

- Incident response operations (incident criteria and response processes i.e. monitoring, detecting, assessing, analyzing, responding, reporting and learning lessons);

- Generic examples of incidents (such as denial of service and malware incidents). Annexes offer criteria for categorizing incidents and template forms.
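Returning to the five phases described under ISO/IEC 27035-1, the sequence can be modelled as a small ordered enumeration. This is only a sketch: the `Phase` and `next_phase` names are made up for this example, and the rule that an event assessed as "not an incident" stops after the assessment phase is an assumption, not something taken from the standard.

```python
from enum import IntEnum
from typing import Optional


class Phase(IntEnum):
    """The five incident management phases, in order."""
    PLAN_AND_PREPARE = 1
    DETECTION_AND_REPORTING = 2
    ASSESSMENT_AND_DECISION = 3
    RESPONSES = 4
    LESSONS_LEARNT = 5


def next_phase(current: Phase, is_incident: bool = True) -> Optional[Phase]:
    """Return the phase that follows `current`, or None when handling ends.

    Assumption: an event that the assessment phase classifies as
    "not an incident" needs no response, so handling stops there.
    """
    if current is Phase.ASSESSMENT_AND_DECISION and not is_incident:
        return None
    if current is Phase.LESSONS_LEARNT:
        return None
    return Phase(current + 1)


print(next_phase(Phase.DETECTION_AND_REPORTING).name)  # ASSESSMENT_AND_DECISION
print(next_phase(Phase.ASSESSMENT_AND_DECISION, is_incident=False))  # None
```

The `is_incident` flag mirrors the "Assessment and decision" phase, where someone must determine whether a reported event is in fact an incident.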